misha writes:

Denys Hsu is a 3D Artist & Indie Developer in Karlsruhe, Germany. He has created a wonderful plugin for Blender called BlendArMocap, which uses a webcam to motion capture the hands, head and body and easily transfers the result to a rig... The plugin is tiny, about half a megabyte, yet powerful enough to capture intricate motion and save it in Blender.

Motion capture render of Australian Aboriginal in Blender RenderMan renderer

The plugin incorporates Google Mediapipe research code, which can capture hand gestures, face motion and body motion. But there has never been a bridge between the Mediapipe research code and animation software... until now. BlendArMocap provides the link between Mediapipe and rigged animation in Blender.

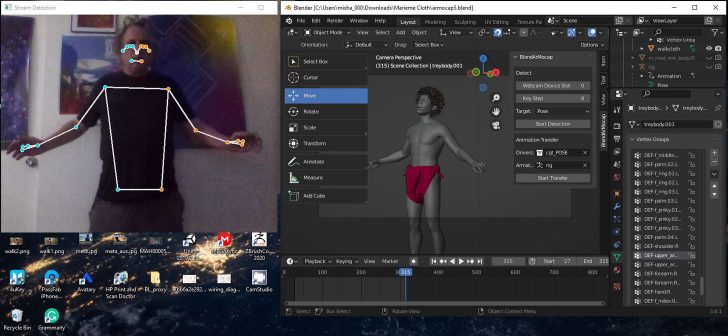

Screen capture of the webcam sensing the body joints and the Blender interface with rigged model

Using the plugin is a breeze. Just one button opens a webcam window and records the motion. The plugin uses Google Mediapipe code to grab the hand, face and body motion and transfers the animation to Blender. The potential of the Mediapipe code has been obvious to many observers, yet it has never manifested as a usable program until now. This is a godsend that democratizes the mocap element of animation: without the expense of mocap gloves, facial markers, face cams or mocap suits, it uses a webcam to get the job done. While the plugin is in beta, the possibilities are very exciting. Bringing this kind of motion capture into Blender has far-reaching consequences. As the Mediapipe code develops, the plugin can develop with it and become a true solution for easy and accurate mocap.
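To make the idea of moving webcam tracking data into Blender a little more concrete, here is a minimal sketch in Python. It is not BlendArMocap's actual code. It assumes Mediapipe-style landmarks (x and y normalized to the frame, z as a relative depth where smaller values are closer to the camera) and a made-up mapping into a Z-up coordinate system like Blender's. The names `Landmark` and `to_blender_space`, and the axis convention itself, are assumptions for illustration only.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Landmark:
    """One tracked point in Mediapipe-style normalized image coordinates."""
    x: float  # 0.0 = left edge of the frame, 1.0 = right edge
    y: float  # 0.0 = top edge of the frame, 1.0 = bottom edge
    z: float  # relative depth; smaller values are closer to the camera


def to_blender_space(lm: Landmark, scale: float = 1.0) -> Tuple[float, float, float]:
    """Map an image-space landmark to a Z-up position (hypothetical convention).

    The frame is centered on the origin, image "down" becomes -Z,
    and the depth value is placed on the Y axis.
    """
    bx = (lm.x - 0.5) * scale   # center horizontally
    by = lm.z * scale           # depth goes on Y
    bz = (0.5 - lm.y) * scale   # flip vertically so that up is +Z
    return (bx, by, bz)


# Example: a wrist in the upper right of the frame, slightly toward the camera.
wrist = Landmark(x=0.75, y=0.25, z=-0.5)
print(to_blender_space(wrist, scale=2.0))  # (0.5, -1.0, 0.5)
```

A real add-on would still have to deal with mirroring, scale relative to the character, and noise in the tracking, none of which this sketch attempts.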

There is no tedious registration; recording starts with the first pose. Rigging is provided by the Rigify plugin, which works well with human characters. Once the rig is applied and properly weighted, the mocap is transferred to the rig, and results are instantly visible in the viewport.
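As a rough illustration of what "transferring" tracked points to a rig can involve, the sketch below computes the bend angle at a joint (for example, the elbow) from three tracked positions. This is only one simple way to think about it and is not taken from the plugin, which may work quite differently. The function name `bend_angle` and the sample coordinates are hypothetical.

```python
import math
from typing import Sequence


def bend_angle(parent: Sequence[float],
               joint: Sequence[float],
               child: Sequence[float]) -> float:
    """Return the angle at `joint`, in degrees, between the segments
    joint->parent and joint->child (180 means a fully straight limb)."""
    a = [p - j for p, j in zip(parent, joint)]
    b = [c - j for c, j in zip(child, joint)]
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(x * x for x in b))
    if norm == 0.0:
        raise ValueError("landmarks coincide; the angle is undefined")
    # Clamp to guard against tiny floating-point overshoot before acos.
    cos_theta = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_theta))


# Hypothetical shoulder, elbow and wrist positions: forearm raised at a right angle.
shoulder = (0.0, 0.0, 1.5)
elbow = (0.3, 0.0, 1.5)
wrist = (0.3, 0.0, 1.8)
print(round(bend_angle(shoulder, elbow, wrist)))
```

An angle like this could then be keyed onto a bone each frame, which is the kind of per-frame data that ends up editable in Blender afterwards.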

Installation requires additional Python code for the plugin to run efficiently. At this time, Blender must be run with administrator privileges. The plugin is meant to be used with a fully rigged character in Blender. The software transfers the motion to the rig very quickly, and previews can be played in the viewport. Because the capture area is small, there is no need to prepare a large capture space. One can motion capture literally at the workstation. Of course, the resulting animation can be edited in the graph editor and pose editor for further refinement.

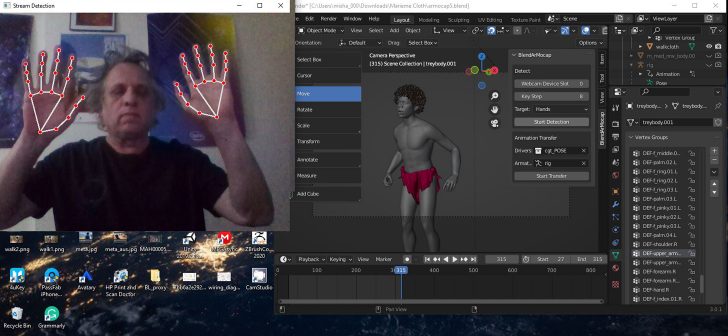

Screen capture of the webcam sensing the head rotations and the Blender interface with rigged model

Tests I ran with the beta achieved amazing results. Recording subtle hand gestures was very easy: just move your hands and the program records the movements. The same goes for the arms, head and body. The Mediapipe software was meant to be simple to use with just a cellphone or webcam. The code Denys created builds on that simplicity and brings the same ease of use to the Blender plugin.

Denys Hsu has already created a very complete project, even in beta form. Hopefully, more people will recognize his potential in this project.

I presented live with Denys "Motion Capture with Your Webcam, BlendMocap addon and how to use it" at World Blender Meetup Day, March 19th, with the Los Angeles Blender Users Group (LA.Blend). I was scheduled from 10:30 to 11am Los Angeles time.

14 Comments

Awesome plugin! A game changer for 0 budget projects. The beta really is promising, I encourage giving a tip to the developer!

Very interesting to see these kinds of developments. Hardware-based motion capture may soon become a thing of the past!

I'm wondering how this compares to the Freemocap (freemocap.org) project. That's also in active development and uses OpenPose, but is more difficult to set up. It also requires at least two cameras and a reference board, but the results are really amazing. Definitely looking forward to also trying out BlendArMocap.

What can I say… a brilliant and talented Mind at the service of the community.

Good luck with the next steps and thanks for contributing to the community

Having seen it here, I'm less inclined to question my eyes, or the possibility of deep fake. I watched it on the World Blender Meetup and wondered if I was the only one who noticed what looked like a black guy in a diaper. Unlike the other examples of motion capture. The demonstration went on as though either no one noticed or wanted to say anything about it. Rather than interrupt what seemed to be a degree of obliviousness I didn't watch it all, because it was distracting. But, why? Having seen other black presenters as well, I wouldn't conclude that it's a widespread tone deafness, due to so much evidence to the contrary. But distracting to say the least.

Was it really just me?

"Was it really just me?"

Yes.

Ok... I don't think it's a "black guy in a diaper", but rather an Australian Aboriginal in his traditional outfit... Don't question your eyes, question your knowledge ;)

My knowledge is of Black people being put in cages and displayed as zoo animals:

https://www.bbc.com/news/magazine-16295827

My knowledge is as a multi-ethnic man in America who traces his roots back to Europe and Africa. As such, I'm sensitized to discrimination and prejudice in America. We do, after all, have the KKK in our history, as well as COINTELPRO, a governmental effort to discredit Martin L. King Jr., leader of the Civil Rights Movement. There is also the Tuskegee experiment; the Dred Scott Supreme Court decision, in which blacks, for purposes of valuation, were determined to be 3/5ths human; the Loving decision, which overturned laws that prevented racial intermarriage by declaring it illegal; and Jim Crow, separate but equal. National Geographic used to be rife with tribal nudity, perhaps considered natural. I looked at it and asked what's the difference between a nude non-white and a nude white woman? It seemed equally, perhaps, tone deaf.

My knowledge has a different context. My knowledge also informs me that comparable things have happened in Canada with their indigenous population as well as in Australia, and certainly in America.

I looked for context in the demonstration. I might have missed it. I watched the opening of the Sydney Olympics and was deeply moved by the inclusion of the original people of Australia - the Aboriginals in the ceremonies.

My reaction was not to spread it on social media as an example of prejudice and discrimination but to express my concern given my perception of it. The reaction of others put me off as to whether or not my perception was mine alone - in ignorance - or was an accurate perception.

I looked for signs of context that would explain and help me understand what I was seeing. Your explanation clarifies that for me and I appreciate it. Context is everything, and knowledge is a part of that context. I thank you for that. You, in your demonstration, felt no need, that I could see to explain it. Everything I read about this demonstration, on World Meetup, points me to Los Angeles:

Los Angeles Blender Users Group (LA.Blend) Los Angeles

Los Angeles, the home of the South Central riots after the video of the beating of Rodney King and the not-guilty verdict for those who did it. Also the home of Reginald Denny, who showed enormous humanity in his decision to forgive those who took their anger out on him, throwing a brick at him and causing severe brain damage.

So, yes, context and knowledge are important. You've provided both. And I've provided ample reason why I, and others, absent that, might have been equally inclined to misconstrue what they were seeing.

I will do a better job next time.

Thank you.

I also did image searches of the man in the video shown here as well as elsewhere on Blender Nation hoping for context as well as background searches on the presenters cgtinker and Mike Amron here. And found nothing that might've otherwise informed me. I might've been ignorant in my original comment, but, not for lack of trying.

Ok, sorry if my message was insensitive, I didn't mean to lecture you or hurt your feelings. I just wanted to clarify things, as the first picture of this article is annotated as "Motion capture render of Australian Aboriginal in Blender RenderMan renderer". Denys Hsu (CGTinker) and Mike Armon (Misha) also pointed it out during their presentations.

Also, I'm aware of all the horrors and injustices you describe (and sadly so much more, day after day...), and I really don't think Mike Armon had any intention of putting on an "indigenous freak show". I've been following his tests on the CGTinker Discord channel, and it seems to be part of a larger project he's developing about Australian Aboriginals; it was not just for the Blender World Meetup. So yeah, a lack, but not an absence, of context misled your judgement, and I can understand your first reaction if you didn't have that information. The presentation was about the technical wonder CGTinker is creating, more about the "how" than the "why" Misha made this use of it, so the context was not spotlighted.

(By the way, sorry if my english is not perfect! Corsican here ;) )

...just saw these comments...my goal was to use that character for demos because it clearly shows motion capture without clothing simulation to worry about and to use an indigenous character, I thought, would be great since those characters are rarely used in demos. Most motion capture demos use a robot or armored character etc. I thought my character would demonstrate cgtinker's add-on clearly and accurately.

My misunderstanding. Props for using indigenous people: aborigines.

I have a question: I am using this with a regular webcam, and I also have a Kinect v2. It's not working with that. Have you tried it?

...I have not tried a Kinect mocap solution in quite a while... there is no need to use a Kinect, a regular webcam will work fine, although a phone or camera connected via USB also works if you set the Webcam Device Slot to a different number...

How do I make it work with custom rigs? I know Rigify humans work great, but what about rigs we created ourselves?