This script will help you speed up rendering of a single frame by having multiple computers render parts of it.

skororu writes:

There seem to be plenty of options for the distributed rendering of animations, but far fewer options for sharing the task of rendering a single frame amongst local machines (and none that worked quite how I wanted), so I wrote one in Python 3.4, you can obtain it from here.

It's easily configurable and run from the command line: in the config file just add the IP addresses of your render nodes, image resolution, Cycles seed and frame number. No complicated master/server arrangements to maintain, everything is done over ssh.

- It's pretty tolerant of being interrupted, and when run again will restart without losing any tiles already rendered

- It benchmarks the supplied nodes (caching the results) to work out the right number of tiles needed to spread the load fairly over the available nodes, minimising idle time towards the end of the render.

- It handles distributing Blender files and supporting textures to render nodes, collects rendered tiles, and seamlessly stitches all the tiles together into a png file at the end.

The script was developed on OS X, but should run on any *nix system with Python 3.4, it should be able to use any *nix type system as a render node. The script is still under development, but it's pretty usable in its current form.

Give it a try and let us know how it works for you!

22 Comments

YyyeeeeeaaaaaaaayyyyyYYYYYYY!!!!!!!

Windows version, someone?

With Cygwin installed on the Windows machines, and Cygwin's ssh daemon configured and running on any Windows render nodes, getting this to work sounds quite plausible. There are some subtle differences in behaviour when using Python's multiprocessing on Windows, but no show-stoppers that I can see from looking at the docs, it might just need a few minor tweaks.

Unfortunately, I don't have any Windows machines to actually test it on atm.

I am very close to getting this working on Windows via Cygwin, courtesy of VirtualBox. There's just one minor issue left to sort out, and I should have that sorted in a day or so. Most of the core commits have been made and are up on GitLab already; the documentation will follow shortly.

Tested and working on Win XP Pro and Cygwin 2.2.1.

Quick update: the script has now been tested on Linux, and works just fine (Ubuntu 12.04.5).

Any Video to watch it in action?

I haven't done one yet, but I'll take a look at that - it will probably end up getting posted on Imgur.

https://imgur.com/Q0XBUtO

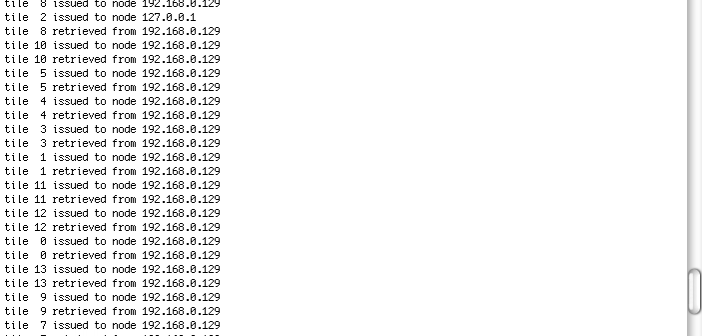

It shows unzipping the source downloaded from GitLab, editing the config file to add some render nodes and setting the image size to something very small just for demo purposes, and then running the script.

I've edited out some of the time waiting for the benchmarks to complete on the remote nodes.

The original GIF I posted seems to play back very slowly on Imgur for some reason, best to use this one instead:

http://imgur.com/SMDjlEs

Any other related videos will be posted in this album:

https://imgur.com/a/5c4zH/all

perfect job

ty!

Now tested and working with the Raspberry Pi 2 (Raspbian 8.0), and much friendlier to flash based filesystems.

Thank you very much for sharing this script! Just what I was looking for :) It works well also with python 3.5.

Just a few comments:

* You could determine the executable path instead of hard-coding it (especially because the script fails without telling why if the path is not set correctly). This could be done in a portable way with distutils.spawn:

import distutils.spawn

BLENDER_BINARY_LOCATION = distutils.spawn.find_executable('blender')

* Is there a reason why you try to find the distribution of tiles over nodes in advance? Why not just use a relatively small tile size (which will render in 10 min. for example) and put all tiles in a queue, then distribute the next tile to the next available node? This would distribute the load better if there are some tiles which render significantly faster then others (e.g. very different levels of details).

And I was wondering: I tried it with two nodes, one of which is faster than the other. The resolution of the image was 1920x1080, but it got split up in only two tiles. Consequently the faster node finished way before the slower one.

Thanks again and keep up the excellent work!

Cheers

Raimar

Thanks for the feedback; very helpful!

The distribution of tiles over nodes calculation at the beginning is only used to determine the minimum number of tiles to split the image into, which (as long as there is more than one node) is doubled to compensate for the variability between benchmark and full quality renders. The tile numbers to be rendered are all contained in a set, and are dished out on demand as nodes become idle.

I'm a bit curious with your two node setup, why only two tiles are being allocated... I'll pop a diagnostics module in to the source sometime next week, which should make tracking the issue a bit easier. As a workaround, you can manually force a higher number of tiles in dtr_user_settings.py:

For example to set 32 tiles:

# Render_config(IMAGE_X, IMAGE_Y, CYCLES_NOISE_SEED, FRAME_TO_RENDER, USER_TILE_SETTING)

image_config = RenderConfig(3840, 2160, 0, 1, 32)

Out of curiosity which platforms are you using?

Ah sorry, I only had a quick look at the code when I tried to find out why only 2 tiles were generated, and I clearly interpreted it wrong. Thanks for clarifying.

My setup:

* Node1 Arch Linux, Intel i3 2.67 GHz Quadcore

* Node2 Arch Linux, Intel i7 1.7 GHz Quadcore (still faster than Node1 for some reason)

* Node3 OSMC on a Raspberry Pi 2, for testing

If I take only Node1 and Node2 I get 2 tiles. If I add Node3 I get 32 tiles, but Node3 only works on one:

node tiles mean duration

Node2 22 0:01:22

Node1 9 0:03:07

Node3 1 0:22:33

So probably the best setting for now is to only take Node1 and Node2 and set the number of tiles to 32 manually, thanks for the hint.

I suspect this may be related to an issue reported a few months ago, but I couldn't reproduce the issue at the time with the information provided: https://gitlab.com/skororu/dtr/issues/1

I've amended the source so it generates some more information in the area where I suspect the issue may be. If you have the time, would you be able to download the current version, and post the output (having let the script decide the number of tiles on its own) please? It would be great to squash this bug.

Sure, glad to help. I will respond to the issue on gitlab.

Thank you very much - I picked up the info you posted on GitLab.

I'll have a look into using distutils.spawn too; I didn't know that was there, hiding in the standard library.

"You could determine the executable path instead of hard-coding it"

The script now checks where Blender is located on the remote nodes, runs a test to ensure its executable, and reports if it's not found, or not working.

It tries 'which blender' first, and if unsuccessful, reverts to checking the hard-coded locations in ~. The former gives good results for Linux distributions where Blender is installed via a package manager, and the latter deals with unusual cases such as (1) newer versions of OS X, where the ssh daemon seems to lock down the path setting options pretty strictly (ssh environment and user $PATH settings appear to be quietly ignored), and (2) installations for Cygwin/Windows.

Thanks again for the suggestions!

Hi there,

I'm trying to set this up in a completely windows/cygwin environment. I must be on the right track because I was able to get the benchmark to work successfully. However whenever I try and do an actual render I get an error right off the bat.

Here's the output:

$ ./dtr.py

>> normal start

>> checking user settings file

>> checking if render nodes are responsive

>> benchmarking

using cached benchmarks for all nodes

check session ident: 3208459136

cached: node blender1.frayed.home benchmark time 0:00:28 active

1920 * 1080 image, frame 1 with seed 0 will be sent whole to the single available node

>> rendering

Assertion failed: nbytes == sizeof (dummy) (/usr/src/ports/zeromq/zeromq-4.1.4-1.x86_64/src/zeromq-4.1.4/src/signaler.cpp:303)

Assertion failed: Aborted

Hi,

I can reproduce this - and thanks for reporting on GitLab too.

This seems to be a Windows/Cygwin specific issue, in that it doesn't occur with OS X and Linux. There's some discussion going on at ZeroMQ with a similar issues, at least one of which looks like it has been patched in the last few days, but that's not in the 4.1.4 release that's part of Cygwin atm.

https://github.com/zeromq/libzmq/issues/1808

https://github.com/zeromq/libzmq/issues/1930

ZeroMQ is used for an upcoming feature - remotely interacting with the render - which hasn't been released yet, but it's working well in development. I'm going to disable ZeroMQ for the moment until the issue is fixed, so the script is usable for you, since it's not needed currently - I'll commit that shortly.

Thanks for the report.

S

The experimental branch now allows the creation of heat maps with 'dtr.py --map', which shows the relative render times of blocks across the whole image. The heat maps are greyscale, mapping black to white as fastest to slowest block render times respectively.

These can be used to help to identify areas of the image that are slow to render, giving the opportunity to investigate and optimise slow regions.

https://gitlab.com/skororu/dtr/wikis/Heatmaps