Will Jackson, Director at Engineered Arts Limited, writes:

Background

RoboThespian started life in 2005 as a rudimentary 'robotic puppet', and over years of intensive experimentation has developed into a sophisticated and complex creation, drawing on the talents of dedicated engineers, designers and organisers working to continually improve software, electronics, mechanical design and conceptual development.

It is at once both an artistic endeavour and an advanced engineering project.

As of February 2010, Engineered Arts Ltd employs 8 people who spend the majority of their working days perfecting an 'acting machine'.

Why use Blender?

In the early days we programmed robot movements using graphs (like IPO curves). It was difficult and not at all intuitive. Try animating a character in FK only, with no image of what you're doing on screen, to get a feel for how hard this is. We couldn't really do it 'off line' at all, which meant having a real robot hooked up to see what you were doing.

With a background in 3D animation I knew there were easier ways, but we didn't want to get into using a closed-source licensed application: first, because we couldn't easily extend the code for our special purpose, and second, because we wanted to include the programming software for our users at no cost. Nor could we justify the time and expense of writing the application from scratch.

About three years ago a friend showed me a Blender animation project, and I realised immediately that we had the answer to our dreams.

It has taken some years and a fair bit of learning to perfect the RoboThespian-Blender link, but it now works pretty well.

How we do it

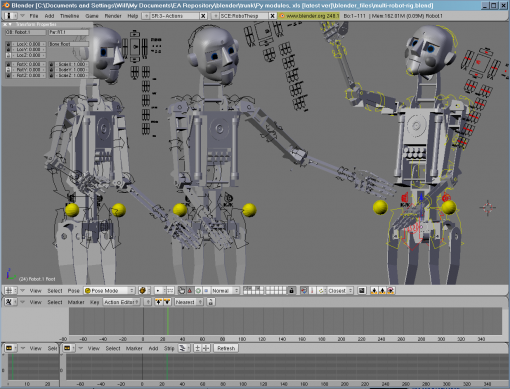

Our virtual RoboThespian model is rigged for IK and FK, with a control to mix the two methods if desired. Because not all the robot's features have bones (eye graphics, face colours, etc.), we also have some floating slider and dial controls that can be animated for those features. A Python script creates an array of all the necessary data and sends it over a TCP socket connection to our back-end software.
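To give a feel for the kind of link described above, here is a minimal sketch of packing per-frame control values and pushing them down a TCP socket. It is not our production script: the newline-terminated JSON message format, the function names and the joint name `head_yaw` are all assumptions made for illustration, and the Blender side (reading values from the rig) is left out so the example runs on its own.

```python
import json
import socket


def encode_frame(frame, joints):
    """Serialise one animation frame as a newline-terminated JSON message.

    The message layout is hypothetical, chosen only for this sketch.
    """
    message = {"frame": frame, "joints": joints}
    return (json.dumps(message, sort_keys=True) + "\n").encode("utf-8")


def decode_frame(line):
    """Inverse of encode_frame: return (frame, joints) from one message."""
    message = json.loads(line.decode("utf-8"))
    return message["frame"], message["joints"]


def send_frames(sock, frames):
    """Send an iterable of (frame, joints) pairs over a connected socket."""
    for frame, joints in frames:
        sock.sendall(encode_frame(frame, joints))
```

In real use the socket would come from something like `socket.create_connection((host, port))`, with the host and port of whatever back end is listening.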

The movement and position data are stored in a MySQL DB and can be replayed by the robot at any time - so once a routine has been programmed, Blender is not needed to make the robot work.
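The record-then-replay idea can be sketched as follows. To keep the example self-contained it uses Python's built-in `sqlite3` as a stand-in for MySQL, and the table layout, column names and function names are assumptions for illustration rather than our actual schema.

```python
import sqlite3


def open_store(path=":memory:"):
    """Open a database and create the (hypothetical) motion table if needed."""
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS motion ("
        "routine TEXT, frame INTEGER, joint TEXT, angle REAL, "
        "PRIMARY KEY (routine, frame, joint))"
    )
    return conn


def record(conn, routine, frame, joints):
    """Store the joint angles for one frame of a named routine."""
    conn.executemany(
        "INSERT OR REPLACE INTO motion VALUES (?, ?, ?, ?)",
        [(routine, frame, name, angle) for name, angle in joints.items()],
    )
    conn.commit()


def replay(conn, routine):
    """Yield (frame, {joint: angle}) pairs for a routine, in frame order."""
    rows = conn.execute(
        "SELECT frame, joint, angle FROM motion "
        "WHERE routine = ? ORDER BY frame, joint",
        (routine,),
    )
    current, joints = None, {}
    for frame, joint, angle in rows:
        if current is not None and frame != current:
            yield current, joints
            joints = {}
        current = frame
        joints[joint] = angle
    if current is not None:
        yield current, joints
```

Because playback only reads from the database, nothing on the playback side depends on Blender.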

We have also developed a way of 'blending' multiple robot motion routines after they are recorded. For example, the robot's mood and movements can change on the fly by mixing and modifying preset behaviours. We are also working on a virtual RoboThespian model that can be driven in reverse, like using a motion capture rig.
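As a rough illustration of what mixing two recorded routines can mean, the sketch below does a plain weighted average of joint angles, frame by frame. This is the simplest possible approach and only an assumption about how such blending might work; it ignores angle wrap-around and timing differences, and the function name and data layout are invented for the example.

```python
def blend_routines(routine_a, routine_b, weight):
    """Linearly mix two routines; weight 0.0 gives A, weight 1.0 gives B.

    Each routine is a list of frames, and each frame is a dict mapping a
    joint name to an angle. Only joints present in both frames are mixed,
    and the result is as long as the shorter routine.
    """
    if not 0.0 <= weight <= 1.0:
        raise ValueError("weight must be between 0.0 and 1.0")
    blended = []
    for frame_a, frame_b in zip(routine_a, routine_b):
        shared = frame_a.keys() & frame_b.keys()
        blended.append(
            {
                joint: (1.0 - weight) * frame_a[joint] + weight * frame_b[joint]
                for joint in shared
            }
        )
    return blended
```

Varying `weight` over time would let a performance drift gradually from one preset behaviour to another.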

We had real trouble getting the relevant joint data in the right format, which needs to be a relative Euler angle resulting from the combination of the IK and FK inputs - not the bone rotations. This turned out to be as simple as multiplying the inverses of the quaternion matrices - doh!

Many thanks to Arne Laub, Jon Topf and Glen Pike for the months of work they put into the project, and to Cery's Marks for the great RoboThespian animations she creates.
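One common way to get a joint angle relative to its parent is to multiply the inverse of the parent's orientation by the child's orientation and convert the result to Euler angles. The sketch below shows that idea in plain Python, without Blender's own maths types. It is an illustration under stated assumptions, not our exact code: quaternions are unit length and stored as `(w, x, y, z)`, and the Euler convention (roll about X, pitch about Y, yaw about Z, composed as Z then Y then X) is chosen for the example and may differ from what a given robot expects.

```python
import math


def quat_conjugate(q):
    """Conjugate of a quaternion; equals the inverse for unit quaternions."""
    w, x, y, z = q
    return (w, -x, -y, -z)


def quat_multiply(a, b):
    """Hamilton product a * b of two (w, x, y, z) quaternions."""
    w1, x1, y1, z1 = a
    w2, x2, y2, z2 = b
    return (
        w1 * w2 - x1 * x2 - y1 * y2 - z1 * z2,
        w1 * x2 + x1 * w2 + y1 * z2 - z1 * y2,
        w1 * y2 - x1 * z2 + y1 * w2 + z1 * x2,
        w1 * z2 + x1 * y2 - y1 * x2 + z1 * w2,
    )


def relative_rotation(parent, child):
    """Rotation of the child expressed in the parent's frame."""
    return quat_multiply(quat_conjugate(parent), child)


def quat_to_euler(q):
    """Convert a unit quaternion to (roll, pitch, yaw) in radians."""
    w, x, y, z = q
    roll = math.atan2(2.0 * (w * x + y * z), 1.0 - 2.0 * (x * x + y * y))
    # Clamp to guard against rounding just outside asin's domain.
    sin_pitch = max(-1.0, min(1.0, 2.0 * (w * y - z * x)))
    pitch = math.asin(sin_pitch)
    yaw = math.atan2(2.0 * (w * z + x * y), 1.0 - 2.0 * (y * y + z * z))
    return roll, pitch, yaw


def quat_about_z(degrees):
    """Helper for the example: rotation of `degrees` about the Z axis."""
    half = math.radians(degrees) / 2.0
    return (math.cos(half), 0.0, 0.0, math.sin(half))
```

For instance, a parent turned 30 degrees about Z and a child turned 75 degrees about Z give a relative yaw of 45 degrees, which is the value a joint servo would need rather than either world-space rotation.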

What's coming next?

One of our current projects involves three RoboThespian robots working together on stage, with theatre lighting and projected video mixed in.

We have a Blender model to control the whole set up including virtual models of MAC 250 moving head lights. We can animate the whole show virtually and it plays back on the real hardware just the same way.


30 Comments

Wow, pretty cool. I like seeing nontraditional uses of blender.

(first)

amazing. blender is being used more and more for creative things

This is pretty awesome, but it would be way cooler if it was mounted from the hip, leaving the legs free to move, and on a moving platform. That way, an entire stage production could be animated in Blender!

Omg, this is f*cking scary! But it's pretty ingenious too.

My God, man! He's not wearing any pants in front of those children...

and worse, the woman on the left is taking a photo...

Great! This is excellent! Thanks for using Blender ;).

Absolutely brilliant! I've subscribed to their channel.

In the video it looks a bit like cg, so I thought maybe it's a hoax :).

I'd rather they put on a few more "realistic" videos of it, in some real-life context, because with the current omnipresence of CG a lot of people might ignore it thinking it's just another animation.

That is seriously cool! I hope he knows the three laws! ;-)

Is the model available for download?

Very nice use of Blender indeed. There seems to be a lot of robotics activity around blender, which shows off a strong point of Blender: the source is available to play with.

pretty cool, but too bad he can't seem to walk.

Awesome :D

@Albinal: Do you know your ten(*)?

(*) http://www.usccb.org/nab/bible/exodus/exodus20.htm

Looks fascinating, and I haven't seen the video yet (evil dial-up slowness).

I love how this article shows the practical value of open-source.

Nice! Now make this robot talk with Ton's voice : ))

Great showcase for Blender!

wow, that's really cool!

where can i see one?

Ah that's great, old school and new school combined!

I prefer living actors. What I would like to see is using this to provide a foundation for open source animatronics. While this is impressive, I really don't care for the idea of a full robot production. If you don't mind seeing the same performance over and over again I guess this is ok, but the constant flow of creativity and the improv in response to the accidents and mistakes that happen on stage is what makes a play worth seeing.

A Robo-theater would be great! This idea and technology could be transported to small toys, so you could program your own robot, or send a file to make someone else's robot do something funny...

@nobody "@Albinal: Do you know your ten(*)? (*) http://www.usccb.org/nab/bible/exodus/exodus20.htm"

Why do you feel the need to make holier-than-thou comments in reply to a mildly humorous reference to Asimov's 3 robot laws, peddling your religion? For all you know, Albinal could belong to one of the many religions that don't recognize "The" Ten Commandments. Comments like these may score you points on bibleblog.com, but here you come across as a pompous bible banger who thinks everyone but themselves is without religion / faith, which is nothing short of ridiculous. You should take some time to reflect on yourself before singling out others like Albinal for your judgement of character based solely on their posts. BTW I'm praying right now that you revisit this blog, or signed up for followup comments via email, & see just how simple-minded your comment was. Thanks & Have a nice day.

You think nobody was being judgmental?

I see his logic, and I would say both are "mildly humorous."

Hopefully the robot doesn't train an army of chickens. :)

well.... where is his wife ;))

great job

It seems like a dream...

Blender has a fresh start with this,

It is very nice to see people using blender

seriously.

That is a big change from a couple of jokers who try

to make movies.

I am enjoying every single second of your video

and keep thinking : Ohh my god ! That is pretty heavy and serious stuff.

Thank you very much indeed.

Ok, we are nearly there, so why not make a movie?

I can see it from where I stand.

RH2

"You think nobody was being judgmental?

I see his logic, and I would say both are “mildly humorous.”

Hopefully the robot doesn’t train an army of chickens. :)"

That would be interesting but not as creepy as Craig Ferguson's Robot skeleton army.

And on the topic of laws, if I remember correctly the "ten" are a summary of 316 OT laws. These are even further compressed by Christ into two (http://niv.scripturetext.com/mark/12.htm [vs 28-31]). My question is who would a robot consider as a neighbor, your toaster oven or pocket calculator perhaps?

Regarding my earlier post, I should have read the actual site before posting, but I still believe that live is better. I would however like to see an open source model that could be used as a foundation for making your own animatronics for theatrical or film productions.

Visitors to the new Copernicus Science Centre in Warsaw, Poland will be able to see three RoboThespians acting in a Stanislaw Lem play (in Polish and English) when it opens in Summer 2010.


@Engineered Arts:

Will I be able to add animations for doing my chores and play them?

Also, if you want the robot to adjust to its environment (for walking without falling, you can't just play an animation), there is some nice Free and Open Source software here: http://www.willowgarage.com/pages/software/ros-platform

Imagine what Blender+ros-platform could do! It would be fantastic to be able to couple them, using Blender for managing, simulating and debugging that stuff as well.

Scary, the hands look a bit like the Terminator robot's skeleton hands.

We need more than three laws, here are three new ones to be added, now we have six:

http://www.sciencedaily.com/releases/2009/07/090729155821.htm

Citation, fair use:

here are the three new laws that Woods and Murphy propose:

* A human may not deploy a robot without the human-robot work system meeting the highest legal and professional standards of safety and ethics.

* A robot must respond to humans as appropriate for their roles.

* A robot must be endowed with sufficient situated autonomy to protect its own existence as long as such protection provides smooth transfer of control which does not conflict with the First and Second Laws.

Definitely on my Christmas list! Very smart to use compressed air for some axes; saves a bunch on the cost, weight, and complexity. With two car batteries supplying 24v and a SCUBA tank and regulator for air, Andy could really go places - literally! Great job!

Love the robo-thespian. I always like to see unique uses of blender. Interesting that this is coming from England. Usually this kind of technology comes from Japan.

@somebody (and nobody): Wow, what a lot of vitriol. Perhaps nobody just didn't understand that the 3 laws were an Asimov reference and not a religious one.

If somebody is ok...nobody is wrong? xD

Now we will see a new robocop movie, and one more of chucky.

This is just the start of a new wave.

The benefit of using Blender, Python and MySQL is that a huge number of variations, themes and reactions can be programmed and stored, so the audience may never see the same display twice.

It's a brilliant concept. Congrats and kudos to all involved.