Oak Ridge National Laboratory in Tennessee is using Blender on its 300,000-core Jaguar supercomputer for scientific visualization.

Mike Matheson reports:

At Oak Ridge National Laboratory in Tennessee, the largest computing complex in the world devoted to computational science, Blender is used to support scientific visualization. Currently, three large liquid-cooled Cray systems are located at the site in a half-acre computer room. The Department of Energy's Jaguar XT5, the University of Tennessee's Kraken XT5 in support of the National Science Foundation, and the National Oceanic and Atmospheric Administration's Gaea XE6 provide the leadership computational resources.


Currently, Jaguar is being transformed into a new Cray XK6, which will be renamed Titan. When the last upgrades are completed, there will be 299,008 AMD cores and 600 terabytes of memory on Titan, along with thousands of next-generation NVIDIA Tesla GPUs. Titan and the other two systems will total more than 500,000 cores and have roughly 1,000 terabytes of memory when completed. The disk infrastructure, with tens of petabytes of high-bandwidth storage, is critical to support the systems.

Blender is well represented in the work done by visualization members at the Oak Ridge Leadership Computing Facility. In fact, at this year's Scientific Discovery through Advanced Computing (SciDAC) Electronic Visualization Night, five of the 12 awards went to ORNL researchers, and all were done with Blender. This competition is an annual event with entries representing visualization work from national laboratories of the United States, universities, and other visualization groups.

Here is just a small subset of examples of Blender use with scientific datasets at ORNL.

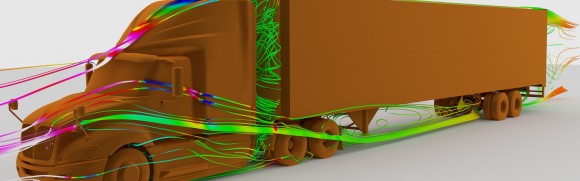

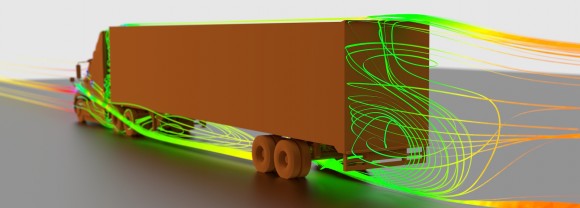

Computational fluid dynamics simulations to aid in the design of fuel efficient trailers for semi-trucks rendered with Cycles:

High speed shock wave / boundary layer interactions from computational simulations.

Magnetic Field Outflows from Active Galactic Nuclei

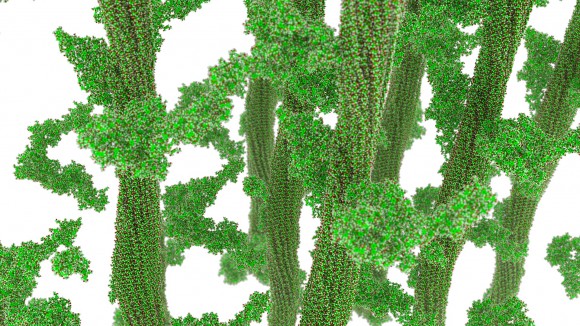

Over 500 million polygons show simulation of processes related to the efficient production of ethanol from cellulose as part of the US energy-policy goals:

Blender runs on the supercomputer, which is Linux-based. At least, most of the renderer does: we don't build the player, the game engine, or features we don't use. We normally don't render on it, simply because it is busy and we have our own clusters with thousands of cores available. The way we normally use any of the compute resources is to generate frames in parallel. We assign 1 to N frames per node and use hundreds of nodes simultaneously. We try to keep the maximum time to render a single frame under 1 hour, and usually around 20 minutes. Since we run this way, we don't really exploit any high-speed interconnects like those available on the Cray, which is another reason we don't typically use it (it's too valuable to people who need the interconnect). We use Maya/MentalRay as well, but because of license restrictions we can never match the sheer horsepower that we can employ with Blender. So our unique situation really makes Blender a great tool for us.
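As a rough illustration of the "frames in parallel" approach described here, the sketch below splits a frame range into contiguous chunks, one per node, and builds a Blender command line for each chunk. This is not ORNL's actual tooling: the helper names, the `scene.blend` file name, and the even-split policy are assumptions for illustration, and how each command gets launched on a node (a batch scheduler, ssh, or something else) is left out entirely. The flags `-b`, `-s`, `-e`, and `-a` are Blender's standard options for background mode, start frame, end frame, and animation rendering.

```python
def split_frames(first, last, n_nodes):
    """Split the inclusive frame range [first, last] into contiguous
    chunks, at most one per node, with sizes differing by at most 1."""
    total = last - first + 1
    base, extra = divmod(total, n_nodes)
    chunks = []
    start = first
    for node in range(n_nodes):
        # The first `extra` nodes each take one additional frame.
        size = base + (1 if node < extra else 0)
        if size == 0:
            break  # more nodes than frames: leave the rest idle
        chunks.append((start, start + size - 1))
        start += size
    return chunks


def blender_command(blend_file, start, end):
    """Build an argument list that renders frames start..end in
    background mode (hypothetical helper, not part of Blender)."""
    return ["blender", "-b", blend_file,
            "-s", str(start), "-e", str(end), "-a"]


# Example: 1440 frames spread over 128 nodes (hypothetical scene file).
chunks = split_frames(1, 1440, 128)
commands = [blender_command("scene.blend", s, e) for s, e in chunks]
```

Because every chunk is independent, nothing needs to travel between nodes during the render, which is why a fast interconnect brings no benefit for this kind of job.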


So a very typical render would be to generate 60 seconds of animation at 24 frames per second, for 1440 frames. I'd take 128 nodes of roughly 16 cores each (2048 cores). I'd get back 3 x 128 frames every hour, so in less than 4 hours I'd have 1 minute of HD animation. So it is possible to generate 60-90 second clips in a night without requiring a lot of resources (compared to what we have, anyway). However, from the number of nodes we have, you can see that we could render many minutes of video in less than 30 minutes if we needed to. We (visualization/scientists) are the current bottleneck, since we have vast amounts of computing resources. Usually these short clips are sufficient for the needs of most scientists. The most cores that I can think of using simultaneously is probably around 7500-8000. It was a case of rendering the exact same scientific data set with 3 or 4 different cameras.
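The arithmetic behind that estimate can be written out as a small sketch. The function name is made up for illustration, and the rate of 3 frames per node per hour simply restates the roughly 20-minute render time per frame mentioned above.

```python
def render_estimate(seconds, fps, nodes, frames_per_node_per_hour):
    """Return (total frames, wall-clock hours), assuming every node
    renders whole frames independently at the same steady rate."""
    frames = seconds * fps
    frames_per_hour = nodes * frames_per_node_per_hour
    return frames, frames / frames_per_hour


# 60 s at 24 fps on 128 nodes, each finishing ~3 frames per hour.
frames, hours = render_estimate(60, 24, 128, 3)
print(f"{frames} frames in about {hours:.2f} hours")
```

With these numbers the cluster returns 384 frames per hour, so the 1440 frames take 3.75 hours, consistent with the "less than 4 hours" figure.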

The most demanding use of Blender we have is for presentations on Everest. Everest is a 35 MPixel powerwall and we do render a select few animations at this resolution which is 17x higher resolution than HD (1920x1080). These frames are absolutely brutal and every rendering artifact will be visible so it takes a lot of care in creating them. These frames take a long time to render and this is the sole use case where we have used many nodes to render single frames - although we still usually will just render 1 frame per node. If we are doing stereo - double the effort.
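The "17x" figure can be checked with a few lines. This sketch takes "35 MPixel" at face value as 35,000,000 pixels, which is an assumption, since the exact dimensions of the wall are not given here.

```python
HD_PIXELS = 1920 * 1080        # 2,073,600 pixels per HD frame
EVEREST_PIXELS = 35_000_000    # "35 MPixel" as quoted; assumed round figure

ratio = EVEREST_PIXELS / HD_PIXELS   # pixels per frame relative to HD
stereo_ratio = 2 * ratio             # stereo renders one frame per eye

print(f"mono: {ratio:.1f}x HD, stereo: {stereo_ratio:.1f}x HD")
```

The ratio comes out just under 17, and stereo pushes the pixel count per frame to roughly 34 times HD.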

![bRoomComputersPass0751[2]](https://www.blendernation.com/wp-content/uploads/2011/11/bRoomComputersPass075121-580x378.jpg)

57 Comments

HAH! That's pretty much a geeky dream come true ... pretty slick.

Bet it runs Cycles really well too :P

Not much of a supercomputer if it didn't. :P

As the article mentions, they have Tesla GPUs. So obviously they can.

how fast would they have rendered BBB on that bad boy!?

I must admit that I didn't understand a thing but I still think that this is pretty cool.

Hehe, that comment gave me a good laugh. Thanks :)

Amen for that!! :)

A fan of super computers (I'm into World Community Grid) I do understand. It's great to know this kind of thing is happening.

I got pretty similar response from my wife when I jumped around the dining room yelling about all the cores and terabytes of memory - "I don't understand a thing". But she didn't think it was cool. She said I was a King-Geek :)

Amazing, Blender rockz !!!

slap in the face for bill gates and his money-grubbing organization of pirates. haha :) i like Linux! :)

It's great to see Blender being used in such an environment.

Wow, agreed, that is every nerd's dream come true...

Oak Ridge is the birthplace of thorium nuclear power. This article is a collision of my two favorite worlds!

nerdgasm

nerdvana

I've got to remember that word. I get it quite often....

next project : using blender to simulate the universe ... in real time :)

The universe *is* the simulation. It feels like real time because that's all we know. It's actually a single person in a desert, manipulating rocks as bits, one at a time. http://xkcd.com/505/

I want to tell everyone I know about this because it's just so cool but they wouldn't understand me. Maybe in thirty years I'll have that kind of power.

And in a few years we'll have that computing power in our cellphones (or whatever these personal communication devices may be called). :D

Cool, Cause I live in Tennessee .... =)

They do tours, how about you visit them and prepare a cool report for the rest of us? :)

I didn't know they did tours. Oak Ridge is about 2 hours West of me. Maybe I'll head down there sometime!!! Awesome!

You should talk to Mike and get the Blender tour %^)

ah, you advertise on blender, and you have to allow project Mango to use your machines in the spirit of giving something back?

:)

TFS

Hahahaha... i would love to see that!

: D

the power of freedom

Huh? They have supercomputers in China and Iran too.

They probably aren't running Blender... ;-)

no, they're running Stuxnet

The third most powerful supercomputer in the world is using blender. Just pure awesome.

I think this quote fits here: "Now THAT'S a can of Epic Sauce!" :P :D

next year intel is coming with the new knife intelchip for PC with an equivalent of 50 processors inside

which will change the world of CG

this will probably also change the setup they have there at Oak Ridge!

so it's a field in constant motion

but interesting to see such a high class lab using blender for scientific rendering

happy blender

That's really cool! :)

The biggest session we've had so far at Renderfarm.fi was done with the help of some 3000+ CPU cores (see http://www.renderfarm.fi/animations/2240). In case you guys at Oak Ridge would be interested in trying out our BOINC-based open source back-end for your rendering needs, please do get in contact.

Forgot to compliment on the super cool post. One of the most interesting ones in a while. I'm pretty positive that with the help of this material I'll be able to convince some partner universities to take a serious look at Blender for their visualization needs. Cheers :).

I got the chance to work at NERSC, the supercomputing center for Lawrence Berkeley National Lab and ran some Blender rendering on the Hopper Supercomputer. Awesome Stuff!

I bet that thing could run luxrender at 60fps!

I wonder... full real time rendering in Cycles... Is it possible with so many cores available?

It's interesting that these large, government funded labs have working conversions between Blender and tools like VisIt and ParaView. As a researcher who could sure use such tools, I really wish they were open-sourced!

Well, maybe they are.. I'll forward your question to Mike.

That would be great! :-) There are quite a few threads (on blenderartists, etc) about interop between "more typical" scientific visualisation tools and Blender, but often the conversion is limited to file transfer in some 3rd-party format like x3d.

Where's the tutorial? :P

Does anyone know how to do renders that just look like these, without actually being physically accurate?

Any donation from them for using Blender?

Cash or code?

Should they? How about contributing this amazing case study?

If they don't... then of course they should! They spend lots of money (millions?). And I see people who ask around 15k for a complete OpenCL compositing node solution. I see more... people who ask for 5 bucks for food. So imagine. They are earning using Blender, they work on Blender, they ask for gov. and corp. money.

In my opinion the biggest flaw in open source is that only a few big players with real money donate.

The case study is a different story; of course it is very interesting. But anyway, they should donate, and they should be proud of that.

Indeed: donation to hire one additional full-time programmer at the Blender Institute!

I thought the 2nd picture was a motherboard!

Me too. Actually I didn't take a close enough look to realize it wasn't until I read your comment. lol

just curious guys, how much does it cost?

Yeah, me too. I'd like to get one of those and put it in my bedroom to render out my blender projects...

Great article and most insightful as to how well blender can adapt to all sorts of environments.

Is it possible at home, using a PC and Blender, to make a "wind tunnel" for aircraft tests?

And how accurate would it be?

I am a beginner in Blender and I have a problem using the boolean tool. When I apply a boolean with the difference option to any object, it gives the expected output in the 3D model, but when I render that model, the faces where I applied the boolean do not look right. Can anybody help me solve this problem?

Hi - I'm new to blender - I'm trying to construct a jet aircraft model, or use an existing one. The model should have accurate dimensions. Can anyone point me to a mailing list or site that may be appropriate? Thanks! - Hal