Behind the Scenes: Kaspersky Space Security, part 1

About me

Hey there! My name is Sergey and I am a CGI artist from Novosibirsk, Russia. I love movies and video games, and I’m a big fan of rock music and a singer in a band.

I started working in 3D in 2017. Actually, long before that, sometime in 2007, I tried to learn 3D using a huge and boring book about 3ds Max 9. It was so long ago that nobody had even thought to upload video tutorials to YouTube. At that time, I worked as a web designer in my hometown, Novokuznetsk, and there was no way to earn a living with 3D there. So I lost interest and turned more to creative retouching with a bit of graphic design. At some point, I found that without 3D, my skills as a CGI artist were quite limited. So I started from scratch with Cinema 4D for modeling and 3ds Max + Corona for visualization, which was the studio’s standard pipeline at the time. I learned from YouTube tutorials, and over a couple of years I tried almost all 3D software and the most popular render engines, except Blender. And then Blender 2.8 Beta walked into the bar and I was like: “Wow! How come one tiny piece of code can do everything I need, loads of what I didn’t know I needed, and look so damn fine?!” Later, in April 2019, it officially became my main tool for most tasks.

About the project

This project started at the very end of 2019 at the Feel Factory Agency, where I currently work, and lasted until March 2020. It is based on concepts by the Possible Agency. Three key visuals were created, and the third is literally a rework of the first. The production pipeline consisted of me, Blender, and Photoshop.

I’ll divide this post into two parts, one for each concept. The first is a landscape scene and the second will be a rocket.

Landscape

The usual approach for landscape scenes is to use matte painting. But, as often happens in advertising, we decided to try something different. I actually love matte painting, even though I’m not very skilled at it, but it has one component that I hate: browsing stock photos for hours in search of a perfect sample. It really drives me nuts. So I decided to build a scene from Megascans assets instead and later use the rendered image as a base for the matte painting. Also, Megascans has a big advantage: you cannot rotate Rock.jpg and get another rock, right? :)

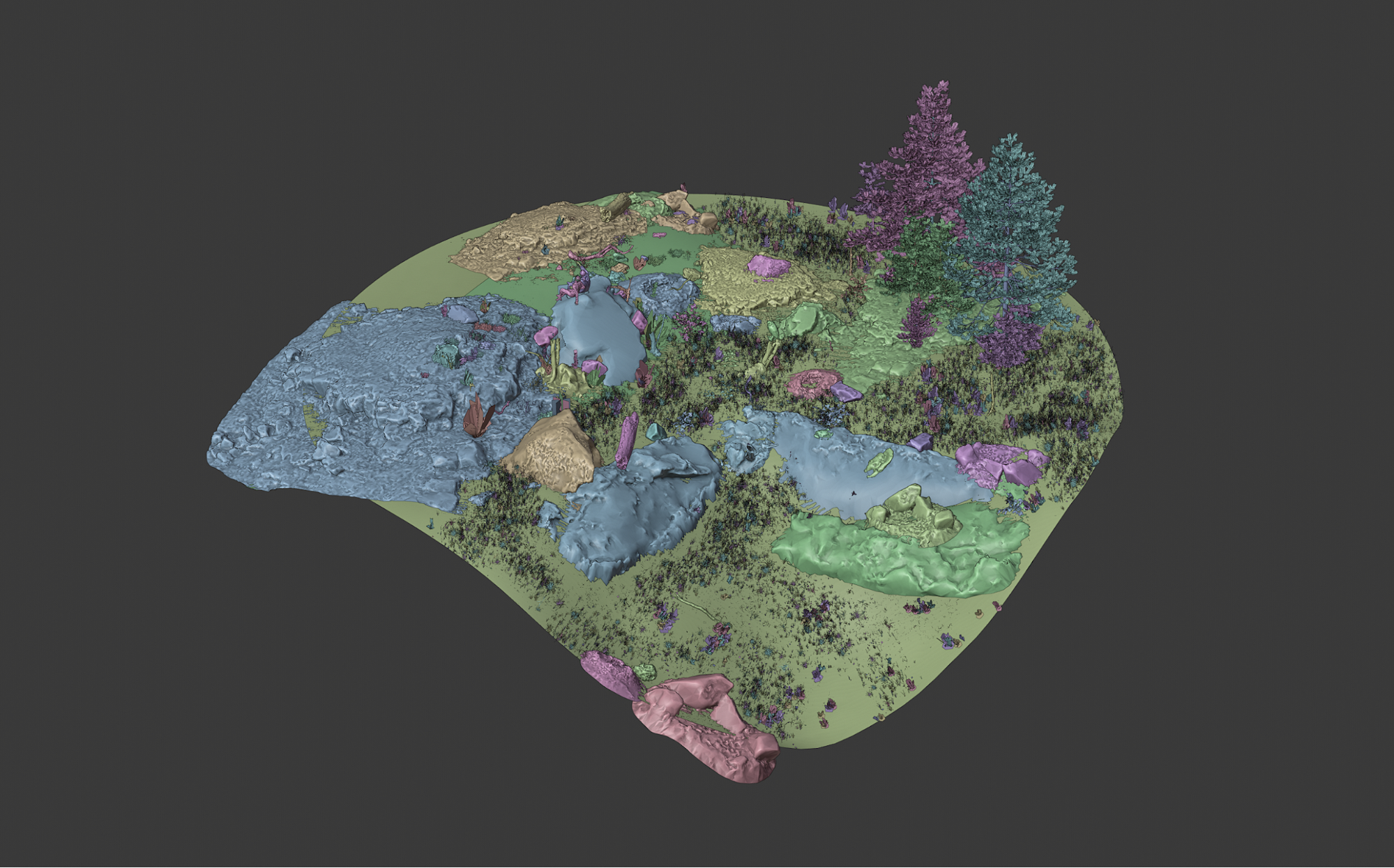

I had a rather simple sketch of the scene made by the agency, so the first step was to get a more detailed image with a proper atmosphere. I collected references, then combined some rock and grass samples, alpha planes for clouds, and roughly posed human models. I lit the scene with a tinted HDRI and a single overhead light source and rendered it with EEVEE. By the way, EEVEE is a huge thing in the early stages of most of my work. It is irreplaceable for quick iterations.

Most of the subsequent steps are quite simple. Check the reference, add more rocks, add more grass and debris, some tree trunks, tune the light setup, repeat. The mountains in the background and the starry sky are also alpha planes with a photographic texture. I tried to simulate the fog in the middle ground, but it cost too much in terms of performance, so I decided to add it in post-production.

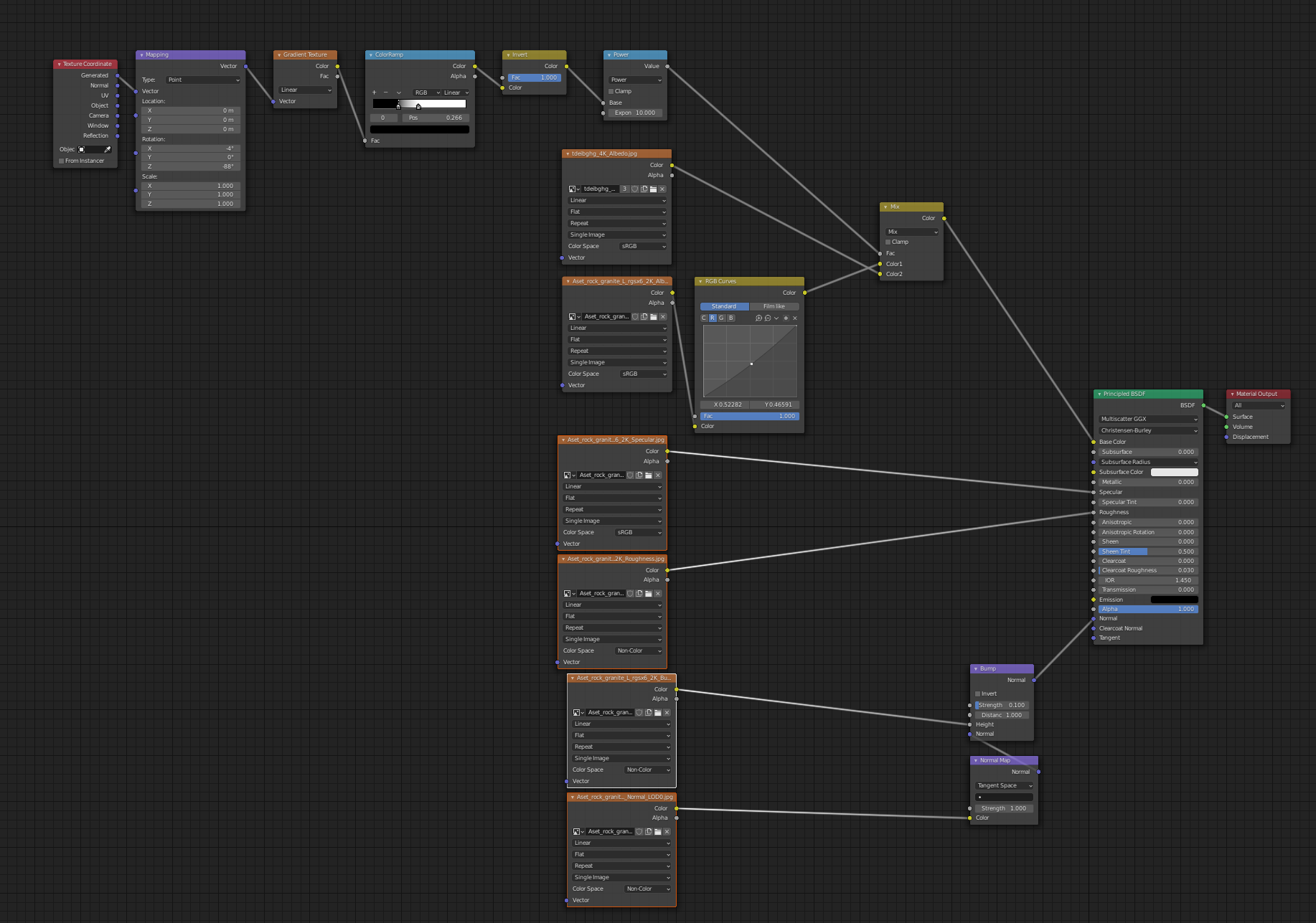

To mix the texture of the ground plane and the assets, I used a simple node setup based on the Gradient Texture Node used as a mix factor.
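
The node graph itself isn’t reproduced here, so the following is only a sketch of the arithmetic behind using a gradient as a mix factor. It assumes a linear gradient along one axis and plain RGB tuples. The function names, the transition range, and the colors are all hypothetical and are not taken from the actual scene.

```python
def gradient_factor(x, start, end):
    """Linear gradient clamped to 0..1, like a gradient texture feeding a mix factor."""
    t = (x - start) / (end - start)
    return max(0.0, min(1.0, t))


def mix_rgb(a, b, fac):
    """Blend colors a and b: fac=0 gives a, fac=1 gives b."""
    return tuple((1.0 - fac) * ca + fac * cb for ca, cb in zip(a, b))


# Hypothetical colors standing in for the ground and asset textures.
ground = (0.20, 0.15, 0.10)
rock = (0.50, 0.50, 0.50)

# Sample the blend at three positions across a transition zone from 0.0 to 2.0.
for x in (0.0, 1.0, 2.0):
    fac = gradient_factor(x, start=0.0, end=2.0)
    print(x, fac, mix_rgb(ground, rock, fac))
```

Near the start of the gradient the asset takes on the ground texture, and it fades to its own texture further along, which is what hides the seam where an asset meets the ground plane.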

It was important for me to make my characters look realistic. Their poses looked unnatural and the textures were quite poor. So I took the existing head and hands from the male character and the female character’s hair and combined them with 3D scanned models from stock. In order to fix the poses, I used a single keyframe cut from a Mixamo animation sample. Then I tweaked some details to fit them onto the surface.

The final image was rendered with an RTX 2060 at 4800×2700 px using the following settings.

Now that the render was ready, I could do some of my darkest sorcery: post-production! I’ve included a WIP video here, but I’ll also describe the process in a few words.

As I am not a very experienced environment artist, I decided to use a trick and add fine details by compositing the scene in Photoshop. Luckily, my stock images fit the light and camera angle perfectly. These additions made my scene 50% more realistic. I also replaced the background mountains and sky with original images. They were more colorful and easier to edit. Constellations were based on 3D meshes. I drew the main edges with a sharp brush, masked them in particular areas, then added some stardust with a scattered brush. I marked vertices with a single star and surfaces with large star fields to add some volume to the shapes.

Looks like it’s finally done, but no. I managed to start and to finish the second key visual with a rocket launch pad and then things turned 360°. Literally. The concept of a landscape scene evolved into a panoramic image over the course of a month and a half. Haha, who’s got two thumbs and freaking loves to start projects from scratch!? Yeah, it’s really annoying, but there’s one good thing I’ve learned in this industry. No matter how well you did the job, the second try will always be significantly better.

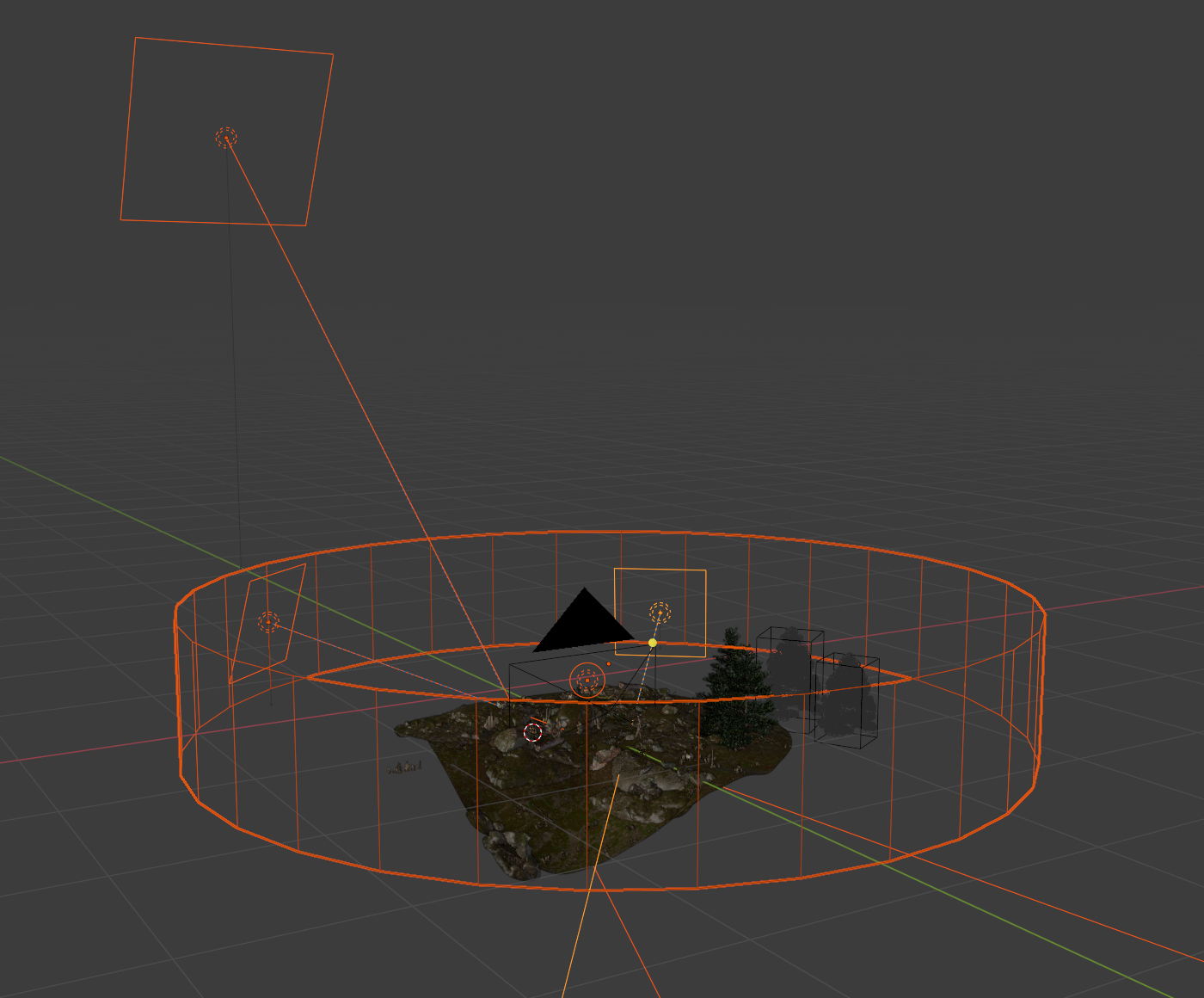

First, I found out that panoramic rendering in Blender is a piece of cake. You just need your camera in the center of a scene, its lens type set to Panoramic with the panorama type as Equirectangular.
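
For anyone who prefers to script these two settings, here is a minimal sketch. It assumes the Cycles property layout of the Blender 2.8x Python API, where the panorama type lives under the camera’s `cycles` settings; newer Blender releases may expose it elsewhere, so check your version. The helper name `make_equirectangular` and the stand-in object are hypothetical.

```python
from types import SimpleNamespace


def make_equirectangular(cam_data):
    """Set camera data to an equirectangular panorama (Cycles, Blender 2.8x layout)."""
    cam_data.type = 'PANO'  # "Panoramic" lens type in the UI
    cam_data.cycles.panorama_type = 'EQUIRECTANGULAR'
    return cam_data


# Stand-in object so the sketch runs outside Blender.
# Inside Blender you would pass bpy.context.scene.camera.data instead.
demo_cam = SimpleNamespace(
    type='PERSP',
    cycles=SimpleNamespace(panorama_type='FISHEYE_EQUISOLID'),
)
make_equirectangular(demo_cam)
print(demo_cam.type, demo_cam.cycles.panorama_type)
```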

According to the concept, my scene had to contain three “screens”: the one with the characters, which was practically done, a second with trees and an owl, and a last one with city lights below. To make scene building more comfortable, I temporarily changed my camera type back to Perspective and set the field of view to 120°. Rotating the camera at 120° intervals let me see exactly one third of the scene in the viewport at a time. Then I changed back to Panoramic for rendering.
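
The numbers behind this trick are easy to work out. The sketch below converts a 120° horizontal field of view to a focal length and lists the camera yaw for each screen. It assumes a 36 mm sensor width (Blender’s default) and that the screens are spaced evenly starting at 0°; both function names are hypothetical.

```python
import math


def focal_length_mm(fov_deg, sensor_width_mm=36.0):
    """Focal length that gives a horizontal field of view of fov_deg."""
    return sensor_width_mm / (2.0 * math.tan(math.radians(fov_deg) / 2.0))


def screen_yaws(count=3):
    """Camera yaw in degrees for each of `count` equal screens around 360°."""
    step = 360.0 / count
    return [i * step for i in range(count)]


print(round(focal_length_mm(120.0), 2))  # 10.39
print(screen_yaws())                     # [0.0, 120.0, 240.0]
```

In Blender itself you can skip the conversion and set the camera’s field of view directly, since camera data exposes an `angle` property in radians.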

As my source scene was growing to three times its size, it definitely required optimization. I used the Decimate modifier to reduce the polycount and the Mask modifier to hide parts of the meshes that were invisible to the camera.
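
Adding the two modifiers can also be scripted. This is a rough sketch, not the settings used in the project: the `ratio` value and the vertex group name `camera_visible` are hypothetical, and the Mask modifier assumes you have already assigned the camera-facing vertices to that group.

```python
def add_optimizing_modifiers(obj, ratio=0.5, visible_group="camera_visible"):
    """Add a Decimate and a Mask modifier to a mesh object.

    ratio and visible_group are illustrative values, not the author's settings.
    """
    decimate = obj.modifiers.new(name="Decimate", type='DECIMATE')
    decimate.ratio = ratio  # target ratio of remaining geometry (Collapse mode)

    mask = obj.modifiers.new(name="Mask", type='MASK')
    mask.vertex_group = visible_group  # only vertices in this group stay visible
    return decimate, mask


# Inside Blender, something like:
#   import bpy
#   add_optimizing_modifiers(bpy.context.active_object, ratio=0.3)
```

Both modifiers are non-destructive, so the original meshes stay intact if a hidden part later needs to come back into view.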

The process of filling the scene was almost the same, except this time I needed to place some quality grass and trees. The previous version of the landscape turned out quite dark and brutal. At least, I thought that was the way it should look. This time I decided to make it a bit more colorful. In addition, grass helped me hide imperfections in the ground and the lack of detail in the foreground. For the grass, I used the Graswald addon. It’s very easy to use and it has amazing grass and flower samples. For the trees in the second screen, I used Grove 8. The owl and the campfire are 3D stock assets.

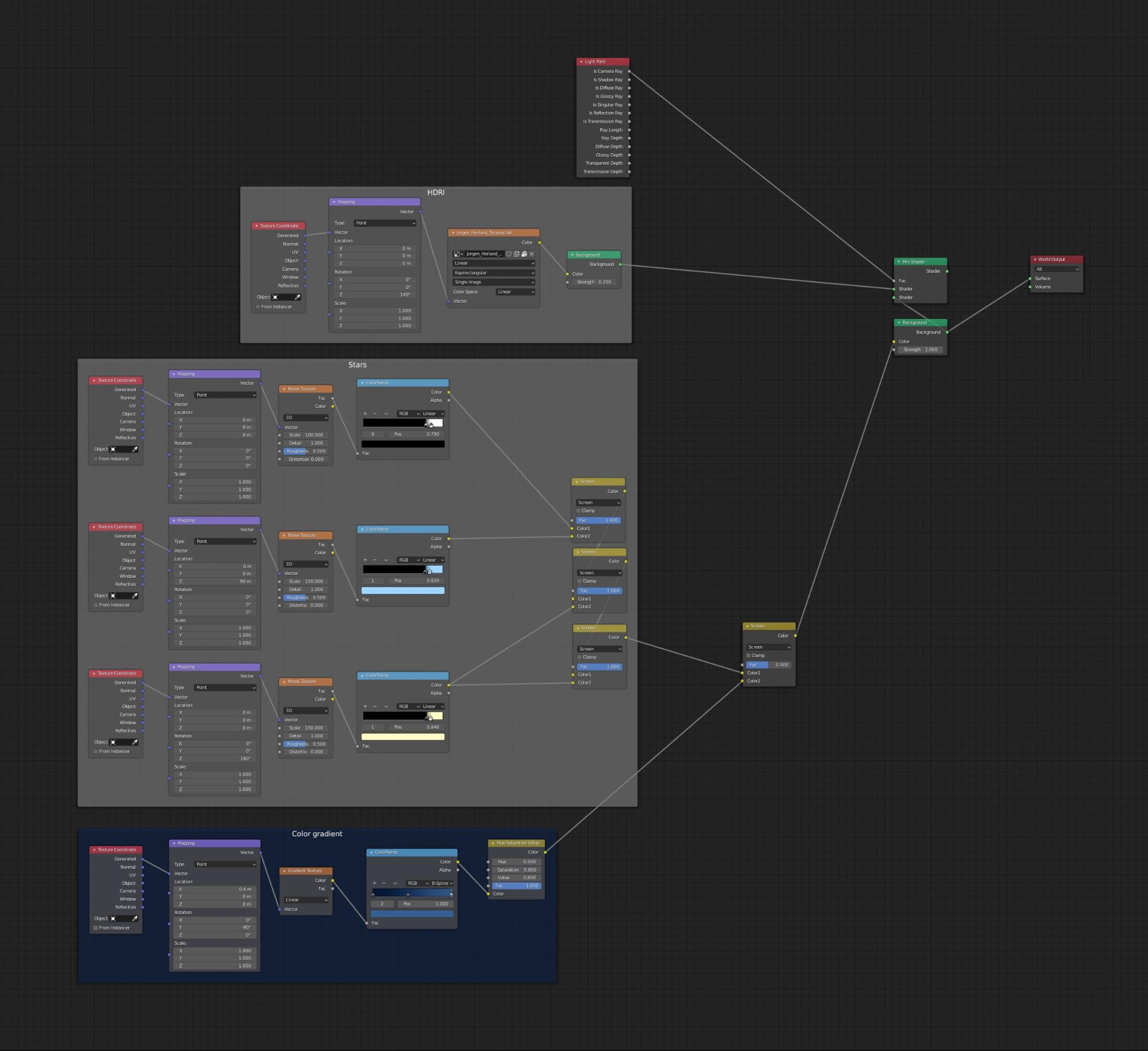

Since this time it was a 360° panoramic scene, I couldn’t just add a starry sky texture in Photoshop. It would cause visible seams and stretching at the top of the panorama. So I needed to generate it procedurally. The procedural texture is mixed with the HDRI map using the Light Path node’s Is Camera Ray output as the factor, so the camera sees the starry sky instead of the HDRI. However, I rendered it separately for more control at the compositing stage.
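
The logic of that mix is simple enough to sketch in plain Python. The Light Path node’s Is Camera Ray output is 1 for rays coming straight from the camera and 0 for everything else, so using it as a mix factor shows one background to the camera while another lights the scene. The function names and color values below are hypothetical stand-ins for the actual world shader.

```python
def mix(a, b, fac):
    """Weight two RGB colors like a mix node: fac=0 -> a, fac=1 -> b."""
    return tuple((1.0 - fac) * ca + fac * cb for ca, cb in zip(a, b))


def world_color(hdri_rgb, sky_rgb, is_camera_ray):
    """Background color seen by a ray; is_camera_ray is 1.0 for camera rays, else 0.0."""
    return mix(hdri_rgb, sky_rgb, is_camera_ray)


hdri = (0.9, 0.7, 0.5)      # hypothetical HDRI sample
stars = (0.02, 0.02, 0.05)  # hypothetical procedural sky sample

print(world_color(hdri, stars, 1.0))  # what the camera sees directly
print(world_color(hdri, stars, 0.0))  # what lights and reflects in the scene
```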

Again, to make the new scene softer, I changed the HDRI to a daylight one, which added a warm tint. For the cold rim light, I used a cylindrical light source, plus three light planes to add accent light on the characters.

So that’s everything on scene building. I rendered the landscape and sky texture at 11520 × 5760 with RTX on and was ready to composite it.
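
A quick bit of arithmetic shows why that resolution suits a three-screen panorama. An equirectangular image covers 360° horizontally and 180° vertically, so pixels map linearly to degrees. The sketch below assumes the first screen starts at the left edge of the image, which depends on how the camera was oriented; the helper name is hypothetical.

```python
WIDTH, HEIGHT = 11520, 5760  # render size from the article

# Equirectangular: 360° across the width, 180° across the height.
px_per_degree = WIDTH / 360


def screen_columns(index, screens=3):
    """Pixel column range [start, end) covered by one screen of the panorama."""
    width = WIDTH // screens
    return index * width, (index + 1) * width


print(px_per_degree)                          # 32.0
print([screen_columns(i) for i in range(3)])  # [(0, 3840), (3840, 7680), (7680, 11520)]
```

Each 120° screen therefore gets a 3840 px wide slice of the render.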

Here I used the same technique as in the non-panoramic image, compositing the render with stock image samples for a more realistic result. In this case, though, I couldn’t use them in the foreground because the perspective of the source images didn’t match. The background mountains, fog, fire, city lights below, and the fireflies were also added in compositing. Here is a WIP video to dive into the process.
